Hollywood and big data

File transfers play a crucial role in filmmaking and video production. Raw footage and partially enhanced videos may have to cross vast distances to reach post-production teams or studio executives for further editing or review. Unfortunately, the commonly used methods of sending these big files are too slow, atrociously tedious, or ridiculously expensive. Let me share a better way.

When are file transfers needed?

In filmmaking or any type of video production, you normally collaborate with several outfits to produce a single movie or video.

Often, after shooting, raw footage still has to be passed on to different post-production companies for video editing, ADR, visual effects, CGI, color correction, sound effects, musical score, and so on. After one team or company is done, the partially edited file might have to be forwarded to another that specializes in a different kind of editing.

For example, after the VFX team is done, they might forward their work to the music team. It’s possible that these people aren’t based in the same location – or even in the same country, for that matter.

There are also times when copies of your raw footage have to be transported in the middle of production. For instance, if you’re shooting a multi-million-dollar film abroad, your producers and studio executives back at HQ will understandably want to be updated on your progress. To keep them up to speed, you might have to send them dailies at the end of each day.

Lastly, if you want to distribute your content to foreign markets, you have to transfer extremely large files to other continents.

Sadly, today’s file transfer technologies cannot efficiently deliver the large media files you normally work with. So how large are they?

Filmmaking’s big file transfer headache

During post-production, effects houses and other post-production outfits may move media files tens of gigabytes in size every day. The dailies you sometimes have to share with studio executives are about this size as well.

The media files meant for distribution? They’re much larger still. Depending on the standard (e.g. 2K or 4K) and other parameters (e.g. frame rate and color depth), one hour of uncompressed video can range from 0.5 to 3 terabytes.
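To get a feel for where figures like these come from, here is a rough back-of-the-envelope sketch. The resolutions, the 24 fps frame rate, and the 10-bit RGB color depth are illustrative assumptions rather than values from any particular production, and real formats add headers and padding on top of this.

```python
def uncompressed_size_tb(width, height, fps, bits_per_channel,
                         channels=3, seconds=3600):
    """Approximate size of uncompressed video in terabytes (10**12 bytes).

    Ignores audio, file headers, and any per-pixel padding a real
    container format might add.
    """
    bits_per_frame = width * height * channels * bits_per_channel
    total_bytes = bits_per_frame * fps * seconds / 8
    return total_bytes / 1e12


if __name__ == "__main__":
    # Assumed example formats: 2K and 4K frames, 24 fps, 10 bits per channel.
    for label, width, height in (("2K", 2048, 1080), ("4K", 4096, 2160)):
        size = uncompressed_size_tb(width, height, fps=24, bits_per_channel=10)
        print(f"{label}: about {size:.2f} TB per hour")
```

Under these assumptions, an hour of 2K footage comes out at roughly 0.7 TB and an hour of 4K at just under 3 TB. Higher frame rates or deeper color push the numbers up proportionally.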

Let’s now see how long it would take to transfer files this big.

Painful ways of sending media

Let’s start with the dailies, which are somewhere between 10 and 30 GB. Let’s assume we have a 20 GB file to transfer. Here are the estimated transfer times over some of the more common Internet access technologies.

Estimated transfer time of a 20 GB file

| Technology | Bandwidth | Approx. transfer time |
| --- | --- | --- |
| T1 | 1.5 Mbps | 30 hours |
| T3 | 45 Mbps | 1 hour |
| Gigabit Ethernet | 1 Gbps | 3 minutes |
| 10 Gigabit Ethernet | 10 Gbps | 16 seconds |

Does this look acceptable? Only if you’re making the file transfer over at least a T3 connection.

As for your 2K files, here’s how transfers would look, assuming a file size of 1.5 terabytes.

Estimated transfer time of a 1.5 terabyte file

| Technology | Bandwidth | Approx. transfer time |
| --- | --- | --- |
| T1 | 1.5 Mbps | 3 months |
| T3 | 45 Mbps | 3 days |
| Gigabit Ethernet | 1 Gbps | 3 hours |
| 10 Gigabit Ethernet | 10 Gbps | 20 minutes |
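If you want to redo these estimates for your own file sizes, the arithmetic is simple: convert the file size to bits and divide by the line rate. The sketch below assumes decimal units (1 GB = 10^9 bytes) and the rounded bandwidths from the tables, and it ignores protocol overhead, so treat the results as best-case figures. The function names are made up for this example.

```python
def transfer_seconds(size_bytes, bandwidth_bps):
    """Best-case transfer time: file size in bits divided by line rate."""
    return size_bytes * 8 / bandwidth_bps


def humanize(seconds):
    """Express a duration in the largest unit that fits."""
    for unit, length in (("days", 86400), ("hours", 3600), ("minutes", 60)):
        if seconds >= length:
            return f"{seconds / length:.1f} {unit}"
    return f"{seconds:.0f} seconds"


# Nominal bandwidths in bits per second, rounded as in the tables.
LINKS = {
    "T1": 1.5e6,
    "T3": 45e6,
    "Gigabit Ethernet": 1e9,
    "10 Gigabit Ethernet": 10e9,
}

if __name__ == "__main__":
    for size_label, size_bytes in (("20 GB", 20e9), ("1.5 TB", 1.5e12)):
        print(f"Estimated transfer time of a {size_label} file")
        for name, bps in LINKS.items():
            print(f"  {name}: {humanize(transfer_seconds(size_bytes, bps))}")
```

The output matches the tables once you allow for rounding. For instance, the 20 GB file over T1 takes about 107,000 seconds, which this sketch prints in days rather than as the table's 30 hours.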

Imagine what file transfer times would be for 4K or 3D video files. Well, if you’re a large film studio, getting a 10 Gigabit Ethernet connection might not be a problem and can easily be justified.

But hold on. You haven’t heard the rest of the story yet.

Increasing your bandwidth may not be the answer

The bandwidths we listed for each network technology are just their maximum capacities. Once you start transmitting over large distances, the actual throughput can be much lower. For example, the actual throughput of a T3 line between Los Angeles, USA and Manila, Philippines would not be 45 Mbps. Rather, it could be as low as 5 Mbps.

If you spend tens of thousands of dollars a month on your dedicated Internet line and only get about 10% of what you pay for, I don’t think you’d be very happy.

This spectacular drop in throughput is largely due to three factors: latency, packet loss, and the nature of TCP. Latency and packet loss are network conditions that are unfortunately present in any wide area network. TCP (Transmission Control Protocol), on the other hand, is the network technology (or protocol) often used for transmitting data over the Internet.

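Both HTTP, which we use to view web pages, and FTP, which we often use to transfer files, work on top of TCP. The problem is that latency and packet loss affect TCP in a big way. TCP only keeps a limited amount of unacknowledged data in flight, so the longer each round trip takes, the less it can send per second. It also treats every lost packet as a sign of congestion and slows down in response.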
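To put rough numbers on this, here is a sketch that estimates a ceiling for the throughput of a single TCP connection. It combines two well-known rules of thumb: a connection cannot send more than one window of data per round trip, and under random packet loss its steady-state rate is approximately (MSS / RTT) × C / √p, an approximation published by Mathis et al. The round-trip time, loss rate, window size, and segment size below are assumed values chosen for illustration, not measurements of any real link.

```python
import math


def window_limited_bps(window_bytes, rtt_s):
    """At most one window of data can be in flight per round trip."""
    return window_bytes * 8 / rtt_s


def loss_limited_bps(mss_bytes, rtt_s, loss_rate, c=math.sqrt(3 / 2)):
    """Mathis et al. approximation: (MSS / RTT) * C / sqrt(p)."""
    return (mss_bytes * 8 / rtt_s) * c / math.sqrt(loss_rate)


def estimated_tcp_bps(link_bps, window_bytes, mss_bytes, rtt_s, loss_rate):
    """The tightest of the three limits is the one that applies."""
    return min(
        link_bps,
        window_limited_bps(window_bytes, rtt_s),
        loss_limited_bps(mss_bytes, rtt_s, loss_rate),
    )


if __name__ == "__main__":
    # Assumed conditions for a long-haul T3 link (illustrative only).
    estimate = estimated_tcp_bps(
        link_bps=45e6,           # nominal T3 capacity
        window_bytes=1_048_576,  # 1 MiB window, i.e. window scaling enabled
        mss_bytes=1460,          # typical Ethernet segment size
        rtt_s=0.2,               # 200 ms round trip
        loss_rate=0.001,         # 0.1% packet loss
    )
    print(f"Estimated single-connection TCP throughput: {estimate / 1e6:.1f} Mbps")
```

With these assumptions the estimate lands at a couple of Mbps, a small fraction of the 45 Mbps the line can nominally carry. Notice that buying more bandwidth only raises `link_bps`, which is not the limiting term here.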

While there’s nothing much you can do about latency and packet loss, you certainly can do something about the protocol you use for your file transfers. That’s exactly what we set out to do when we started developing AFTP, a TCP/UDP hybrid that’s virtually unaffected by latency and packet loss.

If you have to send big files over large distances, we encourage you to read the white paper How to Boost File Transfer Speeds 100x Without Increasing Your Bandwidth. It will help you understand the challenges you’re currently facing or are about to face. In that paper, you’ll learn how to make full use of your Internet bandwidth and get the most out of what you pay for.

You can compare AFTP (Accelerated File Transfer Protocol) with your current file transfer technology by giving AFTP a test run now. Start by downloading JSCAPE MFT Server and AnyClient, both of which have free evaluation editions. For recent performance test results of AFTP, see Accelerated File Transfer in Action.

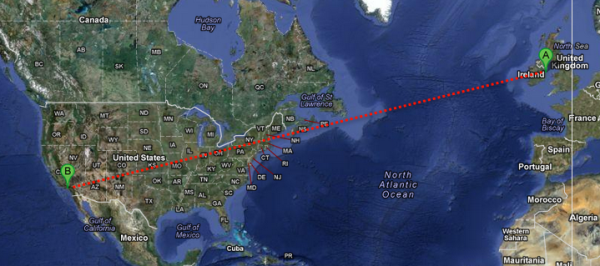

Install the managed file transfer server at one location and the client at another. To see the benefits of AFTP, make sure to use a high-speed connection (>5 Mbps in both directions) that suffers from latency and/or packet loss. This is typical of many transcontinental, transoceanic, and satellite connections. For example, if you have an office abroad, you can install the server there and the client here (or the other way around). Next, transfer a large movie file (you can start with one a few GB in size) and observe the difference.

Done? Yeah, I can see your smile from here.

Summary

Poor network conditions can impede your post-production workflow. Release your movie on time with AFTP.

Downloads

Download JSCAPE MFT Server Trial